Overview

CodeBuddy and WorkBuddy are AI tools launched by Tencent Cloud that support custom AI model integration through models.json configuration files.

By integrating them with the EvoLink API, you can directly use the AI model capabilities provided by EvoLink.

CodeBuddy and WorkBuddy use the same configuration method. This document applies to both.

Prerequisites

Get EvoLink API Key

- Log in to the EvoLink Console

- Find API Keys in the console, click the “Create New Key” button, and copy the generated key

- The API Key usually starts with sk-. Keep it safe

Configuration Steps

1. Open Configuration File

CodeBuddy: ~/.codebuddy/models.json

WorkBuddy: ~/.workbuddy/models.json

2. Add Evolink Model Configuration

Edit the models.json file and add the following configuration:

Currently, only API integration in the OpenAI SDK format is supported.
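For orientation, "OpenAI SDK format" is taken here to mean the chat-completions request shape used by OpenAI-compatible APIs. The Python sketch below only builds such a request body and sends nothing. The helper name `build_chat_request` and the prompt text are illustrative assumptions; the model ID comes from the model list in this document.

```python
import json


def build_chat_request(model: str, user_text: str) -> dict:
    """Build an OpenAI-style chat-completions request body (nothing is sent)."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
    }


body = build_chat_request("gpt-5.2", "Hello")
print(json.dumps(body, indent=2))
```

A provider that accepts this request shape can generally be used from tools that speak the OpenAI format, which is the kind of integration this step relies on.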

More Available Models

In addition to the above examples, you can add the following models. The configuration format is the same; add the “Evolink ” prefix to the name field.

GPT Series:

- gpt-5.2 - Evolink GPT-5.2

- gpt-5.1 - Evolink GPT-5.1

- gpt-5.1-chat - Evolink GPT-5.1 Chat

- gpt-5.1-thinking - Evolink GPT-5.1 Thinking

Gemini Series:

- gemini-2.5-pro - Evolink Gemini 2.5 Pro

- gemini-2.5-flash - Evolink Gemini 2.5 Flash

- gemini-3-pro-preview - Evolink Gemini 3.0 Pro

- gemini-3-flash-preview - Evolink Gemini 3.0 Flash

Doubao Series:

- doubao-seed-2.0-pro - Evolink Doubao Seed 2.0 Pro

- doubao-seed-2.0-lite - Evolink Doubao Seed 2.0 Lite

- doubao-seed-2.0-code - Evolink Doubao Seed 2.0 Code

Kimi Series:

- kimi-k2-thinking - Evolink Kimi K2 Thinking

- kimi-k2-thinking-turbo - Evolink Kimi K2 Thinking Turbo
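The naming rule above can be sketched in Python as a small generator of partial entries. Only the name field is confirmed by this document; the `id` key and the helper `build_entries` are assumptions for illustration. A real entry also needs the remaining fields from the configuration in step 2, which are not reproduced here.

```python
import json

# Model IDs and display names copied from the list above.
MODELS = {
    "gpt-5.2": "GPT-5.2",
    "gpt-5.1": "GPT-5.1",
    "gpt-5.1-chat": "GPT-5.1 Chat",
    "gpt-5.1-thinking": "GPT-5.1 Thinking",
    "gemini-2.5-pro": "Gemini 2.5 Pro",
    "gemini-2.5-flash": "Gemini 2.5 Flash",
    "gemini-3-pro-preview": "Gemini 3.0 Pro",
    "gemini-3-flash-preview": "Gemini 3.0 Flash",
    "doubao-seed-2.0-pro": "Doubao Seed 2.0 Pro",
    "doubao-seed-2.0-lite": "Doubao Seed 2.0 Lite",
    "doubao-seed-2.0-code": "Doubao Seed 2.0 Code",
    "kimi-k2-thinking": "Kimi K2 Thinking",
    "kimi-k2-thinking-turbo": "Kimi K2 Thinking Turbo",
}


def build_entries(models: dict) -> list:
    """Return partial entries whose name carries the "Evolink " prefix.

    "id" is an assumed key name; "name" is the field named in this document.
    """
    return [
        {"id": model_id, "name": f"Evolink {label}"}
        for model_id, label in models.items()
    ]


entries = build_entries(MODELS)
print(json.dumps(entries[:2], indent=2))
```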

3. Save and Restart

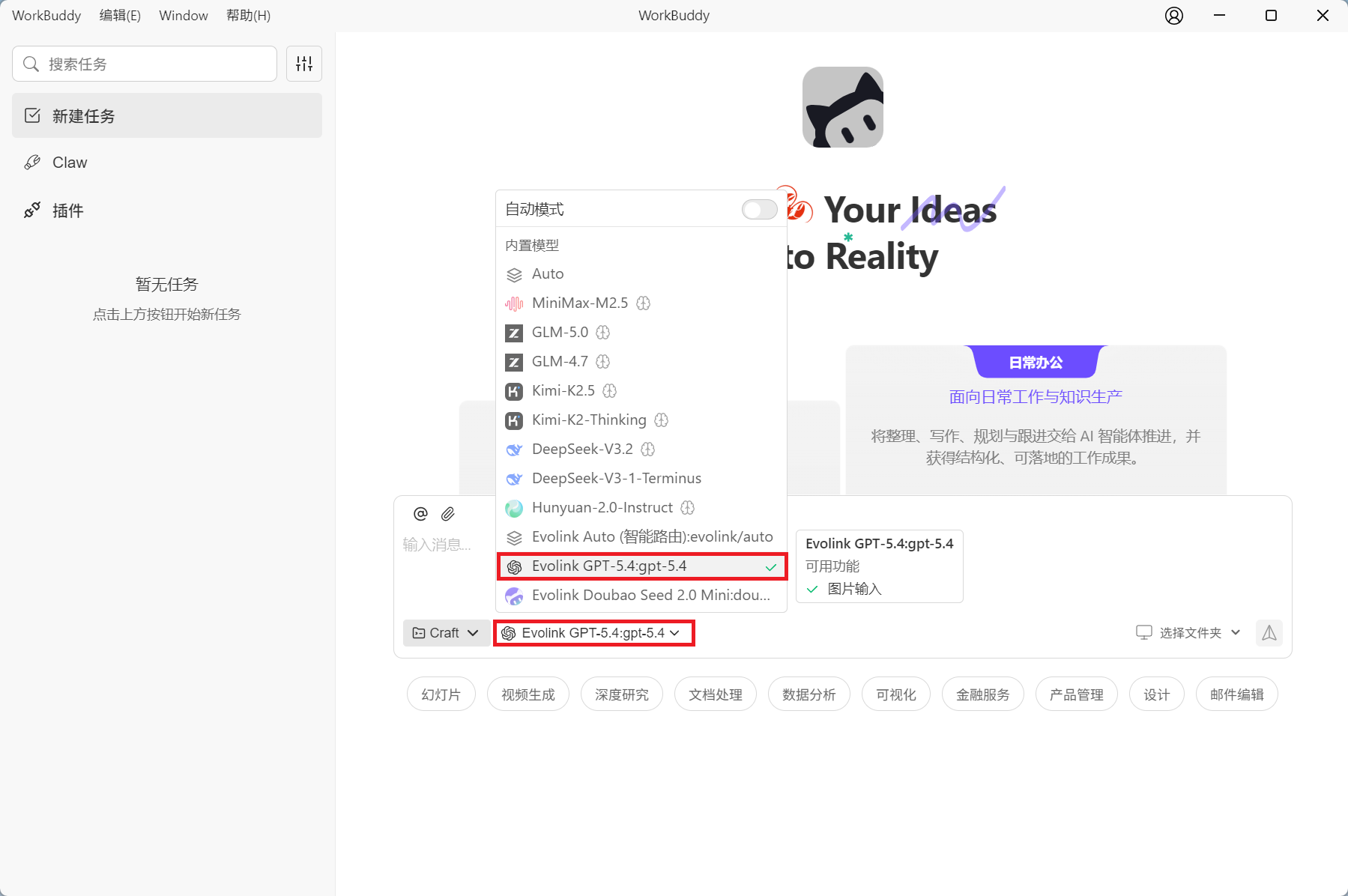

After saving the configuration file, the tool will automatically detect configuration changes and reload (1 second debounce delay). After configuration is complete, you can see all configured Evolink models in the model selection dropdown:

Using Evolink Auto Smart Routing

What is Evolink Auto?

Evolink Auto is an intelligent model routing feature that automatically selects the most suitable AI model based on your request content.

Core Advantages

- Smart Matching: Automatically analyzes request content and selects the most suitable model

- Cost Optimization: Prioritizes cost-effective models while ensuring quality

- Load Balancing: Automatically distributes requests among multiple models to improve system stability

- Transparent: Returns the actual model name used in the response
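As a rough illustration of the "Transparent" point, the Python sketch below reads the model name from a response. It assumes the response follows the OpenAI chat-completions shape, where the model name is a top-level `model` field. Whether Evolink Auto reports the routed model in exactly that field is an assumption, and the sample response is made up.

```python
from typing import Optional


def routed_model(response: dict) -> Optional[str]:
    """Return the model name reported in an OpenAI-style response, if present."""
    # Assumption: the routed model appears in the top-level "model" field.
    return response.get("model")


# Hypothetical response body, for illustration only.
sample = {"model": "example-model-id", "choices": []}
print(routed_model(sample))
```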

Usage

Select “Evolink Auto (Smart Routing)” in the model selection dropdown.

Limit Available Model List

If you only want to display specific models in the dropdown, you can use the availableModels field:
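A minimal Python sketch of setting this field on an already loaded configuration follows. It assumes that availableModels is a top-level key holding a list of model IDs; the exact value format is not shown in this document, so check it against your tool before relying on it. The helper `limit_models` is hypothetical.

```python
import json


def limit_models(config: dict, allowed_ids: list) -> dict:
    """Return a copy of config with availableModels set to allowed_ids."""
    updated = dict(config)  # shallow copy, so the caller's dict is untouched
    # Assumption: availableModels is a list of model IDs.
    updated["availableModels"] = list(allowed_ids)
    return updated


config = {"models": []}  # placeholder for the parsed models.json content
limited = limit_models(config, ["gpt-5.2", "gemini-2.5-pro"])
print(json.dumps(limited, indent=2))
```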

FAQ

1. Where is the configuration file?

CodeBuddy:

- macOS/Linux: ~/.codebuddy/models.json

- Windows: C:\Users\<username>\.codebuddy\models.json

WorkBuddy:

- macOS/Linux: ~/.workbuddy/models.json

- Windows: C:\Users\<username>\.workbuddy\models.json
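The locations above all follow one pattern: a dot-directory named after the tool, inside the user's home directory. The Python sketch below builds that path; the helper name `user_config_path` is an assumption made for illustration.

```python
from pathlib import Path

TOOLS = ("codebuddy", "workbuddy")


def user_config_path(tool: str, home: Path) -> Path:
    """Return <home>/.<tool>/models.json for a supported tool name."""
    if tool not in TOOLS:
        raise ValueError(f"unknown tool: {tool!r}")
    return home / f".{tool}" / "models.json"


# Path.home() resolves to the user's home directory on the current platform.
for tool in TOOLS:
    print(user_config_path(tool, Path.home()))
```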

2. Does it support project-level configuration?

Yes. In addition to user-level configuration, you can create configuration files in the project root directory:

CodeBuddy: <project-root>/.codebuddy/models.json

WorkBuddy: <project-root>/.workbuddy/models.json

Project-level configuration has higher priority than user-level configuration. It is recommended to configure global models at the user level and project-specific models at the project level.
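This document does not describe how the tool combines the two levels. The Python sketch below is one way to picture the stated priority: a project-level entry replaces a user-level entry with the same identifier. The `id` key and the helper `merge_models` are assumptions, not the tool's actual implementation.

```python
def merge_models(user_models: list, project_models: list) -> list:
    """Merge two lists of model entries; project entries win on a shared id."""
    merged = {entry["id"]: entry for entry in user_models}
    for entry in project_models:
        merged[entry["id"]] = entry  # project level overrides user level
    return list(merged.values())


# Made-up entries, for illustration only.
user_level = [
    {"id": "gpt-5.2", "name": "Evolink GPT-5.2"},
    {"id": "gemini-2.5-pro", "name": "Evolink Gemini 2.5 Pro"},
]
project_level = [
    {"id": "gemini-2.5-pro", "name": "Evolink Gemini 2.5 Pro (project)"},
]

for entry in merge_models(user_level, project_level):
    print(entry["id"], "->", entry["name"])
```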

3. What if the configuration doesn’t work?

- Check if the JSON format is correct (use a JSON validator)

- Confirm the API Key is correct

- Try restarting the application
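The first check can be done without an online validator. The Python sketch below uses the standard library `json` module to report where parsing fails; the helper name `check_json` is illustrative, and in practice you would pass it the text of your own models.json file.

```python
import json
from typing import Optional


def check_json(text: str) -> Optional[str]:
    """Return None if text is valid JSON, otherwise a short error description."""
    try:
        json.loads(text)
    except json.JSONDecodeError as err:
        return f"line {err.lineno}, column {err.colno}: {err.msg}"
    return None


print(check_json('{"models": []}'))  # valid input, so no error is reported
print(check_json('{"models": [}'))   # invalid input, so a location is reported
```

Trailing commas and unquoted keys are common causes of a configuration that fails to load, and both are rejected by this check.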